Getting Started with AI Scene Analysis

Quick Start Guide

AI Scene Analysis is enabled by adding a configuration object to the VOD Encoding configuration. It runs automatically during the encoding process, with no separate processing steps required.

Note: AI Scene Analysis requires Bitmovin VOD Encoder v2.232.0 or later.

There are a few ways to try AI Scene Analysis:

1. VOD No-Code Wizard

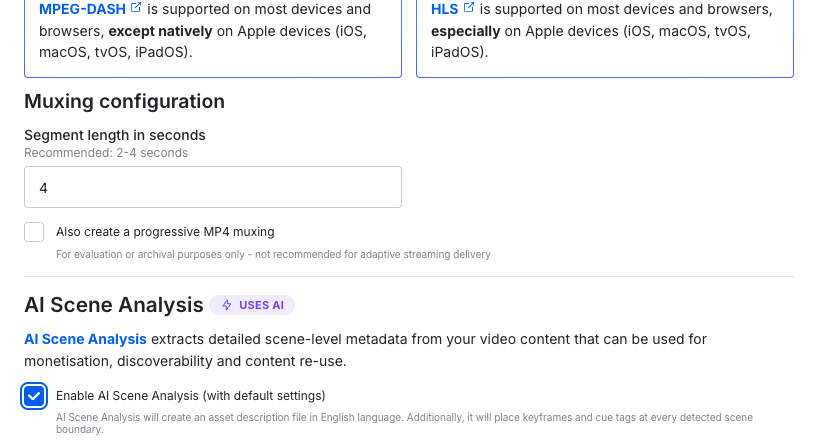

The simplest way to try AI Scene Analysis is to enable it in the VOD No-Code Wizard. The wizard is a step-by-step guide to running a VOD encoding, and the option to run AI Scene Analysis is available in step 2.

AI Scene Analysis option in the VOD Encoding No-Code Wizard

2. Quick Start Python Script

Use the Python script below to run a simple encoding and save the AI Scene Analysis output to a local directory. The script uses the VOD Encoding API Python SDK.

"""

Bitmovin AI Scene Analysis (AISA): Quick Start

Runs a minimal VOD encoding with AI Scene Analysis enabled.
The encoding config is intentionally simple (480p H.264) because
the output quality does not affect AISA, which analyzes the input file.

Usage:
    pip install bitmovin-api-sdk python-dotenv
    export BITMOVIN_API_KEY="your-api-key"
    export BITMOVIN_OUTPUT_ID="your-output-id"
    python aisa_quickstart.py "https://example.com/video.mp4" "My Asset Name"

The input URL can be an MP4 file, HLS manifest (.m3u8), or DASH manifest (.mpd).
The optional second argument sets the output filename (e.g. "My Asset Name" → my-asset-name.json).

"""

import json
import os
import pathlib
import re
import sys
import time
import urllib.parse

from bitmovin_api_sdk import (
    BitmovinApi,
    Encoding,
    HttpsInput,
    H264VideoConfiguration,
    PresetConfiguration,
    Stream,
    StreamInput,
    MuxingStream,
    Fmp4Muxing,
    EncodingOutput,
    AclEntry,
    AclPermission,
    StartEncodingRequest,
    AiSceneAnalysis,
    AiSceneAnalysisFeatures,
    AiSceneAnalysisAssetDescription,
    CloudRegion,
    Status,
    MessageType,
)

# ── Config ──────────────────────────────────────────────────────────
API_KEY = os.environ.get("BITMOVIN_API_KEY")
OUTPUT_ID = os.environ.get("BITMOVIN_OUTPUT_ID")

if not API_KEY:
    sys.exit("Error: set BITMOVIN_API_KEY environment variable")
if not OUTPUT_ID:
    sys.exit("Error: set BITMOVIN_OUTPUT_ID environment variable")

api = BitmovinApi(api_key=API_KEY)


def slugify(name: str) -> str:
    """Convert a human-readable name to a filename-safe slug."""
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


def run(input_url: str, name: str | None = None):
    # Parse the URL into host + path so we can create an HTTPS input.
    # This works for direct MP4 files as well as HLS/DASH manifests.
    parsed = urllib.parse.urlparse(input_url)
    host = parsed.hostname
    input_path = parsed.path
    if parsed.query:
        input_path += f"?{parsed.query}"

    # 1. Input: points to the server hosting the source asset
    https_input = api.encoding.inputs.https.create(
        HttpsInput(host=host, name="AISA quickstart input")
    )

    # 2. Output codec config: minimal 480p H.264 (output encoding quality doesn't affect AISA)
    codec = api.encoding.configurations.video.h264.create(
        H264VideoConfiguration(
            name="H.264 480p",
            preset_configuration=PresetConfiguration.VOD_STANDARD,
            height=480,
            bitrate=1_200_000,
        )
    )

    # 3. Create Encoding
    encoding = api.encoding.encodings.create(
        Encoding(name="AISA quickstart", cloud_region=CloudRegion.AUTO)
    )

    # 4. Stream details: input & output codec config
    stream = api.encoding.encodings.streams.create(
        encoding_id=encoding.id,
        stream=Stream(
            input_streams=[StreamInput(input_id=https_input.id, input_path=input_path)],
            codec_config_id=codec.id,
        ),
    )

    # 5. Muxing: required for the encoding to produce output
    api.encoding.encodings.muxings.fmp4.create(
        encoding_id=encoding.id,
        fmp4_muxing=Fmp4Muxing(
            segment_length=4,
            streams=[MuxingStream(stream_id=stream.id)],
            outputs=[
                EncodingOutput(
                    output_id=OUTPUT_ID,
                    output_path=f"aisa-quickstart/{encoding.id}/",
                    acl=[AclEntry(permission=AclPermission.PUBLIC_READ)],
                )
            ],
        ),
    )

    # 6. Start Encoding with AI Scene Analysis enabled
    api.encoding.encodings.start(
        encoding_id=encoding.id,
        start_encoding_request=StartEncodingRequest(
            ai_scene_analysis=AiSceneAnalysis(
                features=AiSceneAnalysisFeatures(
                    asset_description=AiSceneAnalysisAssetDescription(
                        filename="asset-description.json",
                    )
                )
            )
        ),
    )

    print(f"Encoding started: {encoding.id}")
    print("Waiting for completion...")

    # Poll until finished
    while True:
        status = api.encoding.encodings.status(encoding_id=encoding.id)
        if status.status in (Status.FINISHED, Status.ERROR):
            break
        print(f" {status.status.value}...")
        time.sleep(10)

    if status.status == Status.ERROR:
        errors = [m.text for m in (status.messages or []) if m.type == MessageType.ERROR]
        sys.exit(f"Encoding failed: {errors}")

    # 7. Fetch the AISA results from the API and save locally
    print("Fetching AI Scene Analysis results...")
    aisa_result = api.ai_scene_analysis.analyses.by_encoding_id.details.get(
        encoding_id=encoding.id
    )

    output_dir = pathlib.Path(__file__).parent / "aisa_output"
    output_dir.mkdir(parents=True, exist_ok=True)

    filename = f"{slugify(name)}.json" if name else f"{encoding.id}.json"
    output_file = output_dir / filename
    with open(output_file, "w") as f:
        json.dump(aisa_result.to_dict(), f, indent=2)

    print(f"Done! AISA results saved to: {output_file}")


if __name__ == "__main__":
    if len(sys.argv) < 2 or len(sys.argv) > 3:
        sys.exit(f"Usage: python {sys.argv[0]} <input-url> [name]")
    run(sys.argv[1], sys.argv[2] if len(sys.argv) == 3 else None)

3. Add to your existing Encoding Config

Add the aiSceneAnalysis object to your encoding Start call or into an Encoding Template:

aiSceneAnalysis:
  features:
    assetDescription:
      filename: asset-description.json
      # The following are optional; they output the analysis as a JSON file
      outputs:
        - outputId: <output-id>
          outputPath: ai_content_analysis/{encodingId}
          acl:
            - permission: PUBLIC_READ
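When the Start call payload is assembled in code, the same object can be built as a plain dictionary. The sketch below is only an illustration: `build_aisa_config` is a hypothetical helper, not part of the Bitmovin SDK. It mirrors the field names used in this section and adds `outputs` only when an output ID is supplied.

```python
import json


def build_aisa_config(filename="asset-description.json", output_id=None,
                      output_path="ai_content_analysis/{encodingId}"):
    """Return the aiSceneAnalysis object as a dict (hypothetical helper)."""
    asset_description = {"filename": filename}
    if output_id is not None:
        # Optional part: also write the analysis to your own storage as a JSON file.
        asset_description["outputs"] = [
            {
                "outputId": output_id,
                "outputPath": output_path,
                "acl": [{"permission": "PUBLIC_READ"}],
            }
        ]
    return {"aiSceneAnalysis": {"features": {"assetDescription": asset_description}}}


def to_json(config: dict) -> str:
    """Serialize the config for use in a request body."""
    return json.dumps(config, indent=2)
```

Calling `to_json(build_aisa_config(output_id="<your-output-id>"))` yields the JSON form of the configuration; omitting `output_id` leaves out the optional `outputs` list.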

{
  "aiSceneAnalysis": {
    "features": {
      "assetDescription": {
        "filename": "asset-description.json",
        "outputs": [
          {
            "outputId": "<your-output-id>",
            "outputPath": "ai_content_analysis/{encodingId}",
            "acl": [
              {
                "permission": "PUBLIC_READ"
              }
            ]
          }
        ]
      }
    }
  }
}

Retrieving Your Metadata

Once encoding completes, you can retrieve the metadata in two ways:

1. Direct from Storage

If you configured output to your cloud storage:

# Example S3 path
s3://your-bucket/ai_content_analysis/<encoding-id>/asset-description.json

# Example GCS path
gs://your-bucket/ai_content_analysis/<encoding-id>/asset-description.json

2. Via Bitmovin API
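From Python, the details endpoint can be called with the standard library alone. The sketch below mirrors the curl request shown in this section: the URL path and the `X-Api-Key` header come from that command, while `build_details_request` and `fetch_details` are hypothetical helper names. The shape of the response envelope is not shown in this guide, so the parsed body is returned unchanged.

```python
import json
import urllib.request

API_BASE = "https://api.bitmovin.com/v1"


def build_details_request(encoding_id: str, api_key: str) -> urllib.request.Request:
    """Build the GET request for the AI Scene Analysis details of one encoding."""
    url = f"{API_BASE}/ai-scene-analysis/analyses/by-encoding-id/{encoding_id}/details"
    return urllib.request.Request(
        url,
        headers={"X-Api-Key": api_key, "accept": "application/json"},
        method="GET",
    )


def fetch_details(encoding_id: str, api_key: str) -> dict:
    """Send the request and return the parsed JSON body (performs a network call)."""
    request = build_details_request(encoding_id, api_key)
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)
```

Keeping request construction separate from the network call makes it easy to inspect the URL and headers before anything is sent.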

curl --request GET \
  --url https://api.bitmovin.com/v1/ai-scene-analysis/analyses/by-encoding-id/{encoding_id}/details \
  --header 'X-Api-Key: <YOUR_API_KEY>' \
  --header 'accept: application/json'

Understanding Your Results

The metadata output is a JSON file with this structure:

{
  "scenes": [
    {
      "title": "Scene Title",
      "startInSeconds": 0,
      "endInSeconds": 198.133,
      "id": "uuid",
      "type": "MAIN_CONTENT",
      "content": {
        "characters": [...],
        "objects": [...],
        "settings": [...]
      },
      "summary": "Brief scene description",
      "verboseSummary": "Detailed scene description",
      "keywords": [...],
      "sensitiveTopics": [...],
      "iab": {
        "version": "3.0",
        "contentTaxonomies": [...],
        "adOpportunityTaxonomies": [...],
        "sensitiveTopicTaxonomies": [...]
      }
    }
  ],
  "description": "Overall asset description",
  "keywords": [...],
  "ratings": [...],
  "sensitiveTopics": [...],
  "iabSensitiveTopicTaxonomies": [...],
  "metadata": {
    "disclaimer": "Generated by Bitmovin AI",
    "version": "0.0.0"
  }
}

Common Use Cases

Contextual Advertising

- Enable AI Scene Analysis with IAB taxonomies

- Pass IAB codes to ad server for targeting

- Display contextually relevant ads
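As a rough illustration of the second step, the per-scene IAB data can be reshaped into one record per scene before it is handed to an ad server. This is a sketch under assumptions: `scene_targeting` is a hypothetical helper, the field names are those of the result structure shown earlier, and the shape of each taxonomy entry is not specified in this guide, so entries are passed through untouched.

```python
def scene_targeting(result: dict) -> list:
    """Return one record per scene with its time range and IAB content taxonomies."""
    records = []
    for scene in result.get("scenes", []):
        iab = scene.get("iab") or {}
        records.append(
            {
                "start": scene.get("startInSeconds"),
                "end": scene.get("endInSeconds"),
                # Entries are copied as-is; their internal shape is not assumed here.
                "contentTaxonomies": list(iab.get("contentTaxonomies") or []),
            }
        )
    return records
```

How these records are mapped onto ad request parameters depends on the ad server in use and is outside the scope of this sketch.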

Auto Ad Placement

- Define ideal ad schedule (e.g., every 5 minutes)

- Configure automatic ad placement with reasonable deviation

- SCTE markers inserted automatically during encoding

- Feed output to AWS MediaTailor or similar SSAI solution
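To make the idea of an ideal schedule with a deviation concrete, the sketch below snaps each ideal break time to the nearest scene end within a tolerance. It is not Bitmovin's placement algorithm, which this guide does not describe; `pick_ad_breaks`, `interval`, and `max_deviation` are hypothetical names used only for illustration.

```python
def pick_ad_breaks(scene_ends, duration, interval=300.0, max_deviation=60.0):
    """Snap each ideal break time (interval, 2*interval, ...) to the nearest scene end.

    An ideal time with no scene end within max_deviation seconds is skipped.
    """
    breaks = []
    ideal = interval
    while ideal < duration:
        # Scene ends close enough to this ideal position and before the end of the asset.
        candidates = [
            t for t in scene_ends
            if abs(t - ideal) <= max_deviation and t < duration
        ]
        if candidates:
            best = min(candidates, key=lambda t: abs(t - ideal))
            if best not in breaks:  # avoid using the same boundary twice
                breaks.append(best)
        ideal += interval
    return breaks


def scene_end_times(result: dict) -> list:
    """Collect endInSeconds from every scene of an AISA result."""
    return [s["endInSeconds"] for s in result.get("scenes", []) if "endInSeconds" in s]
```

With a 5-minute interval, an ideal break at 300 seconds would move to a scene end at 290 seconds if the allowed deviation is at least 10 seconds, so the break falls between scenes rather than inside one.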

Content Discovery

- Extract scene metadata for entire library

- Ingest into search engine or recommendation system

- Use keywords and IAB taxonomies for matching

- Enable scene-level search and recommendations
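A minimal sketch of the ingestion step is an inverted index from keyword to scenes, which a search or recommendation system could then consume. It assumes that each entry in a scene's `keywords` list is a plain string, which this guide does not confirm, and `build_keyword_index` is a hypothetical helper name.

```python
from collections import defaultdict


def build_keyword_index(result: dict) -> dict:
    """Map each lower-cased keyword to the scenes (id, title, start) that carry it."""
    index = defaultdict(list)
    for scene in result.get("scenes", []):
        for keyword in scene.get("keywords") or []:
            index[str(keyword).lower()].append(
                {
                    "id": scene.get("id"),
                    "title": scene.get("title"),
                    "start": scene.get("startInSeconds"),
                }
            )
    return dict(index)
```

Looking up a keyword in the returned dictionary gives the matching scenes with their start times, which is enough to link a search hit to a playback position.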

Additional Resources

- API Reference: https://developer.bitmovin.com/encoding/reference

- Dashboard: https://dashboard.bitmovin.com

- Support: [email protected]

- Sales: Contact your account team for pricing and custom arrangements
